Component audits without chaos

Every growing codebase eventually reaches a point where nobody can confidently say how many button variants exist, which card components are actually in use, or why there are three different modal implementations. A component audit brings clarity to that situation, but only if the process itself does not create more disruption than the problem it aims to solve. This article walks through a practical audit process covering inventory, categorization, redundancy mapping, and consolidation, along with the common mistakes that turn audits into stalled initiatives. These techniques apply whether you are working toward a formal design system or simply trying to reduce the maintenance surface of an existing product.

Why component audits matter

Component duplication is invisible until it becomes expensive. A second implementation of a dropdown menu does not break anything when it ships. It breaks things six months later when a designer updates the dropdown behavior and only one of the two implementations gets changed. Users see inconsistent interactions. Developers spend time debugging a component they did not even know existed. QA files tickets against the wrong implementation.

The cost is not just maintenance. Duplicate components inflate bundle size, create conflicting accessibility patterns, and make onboarding harder for new developers who have to learn which version to use in which context. An audit surfaces all of these issues at once, giving the team a complete picture of what exists before deciding what to do about it.

The key word is "before deciding." An audit is not a refactoring project. It is an information-gathering exercise. The inventory tells you what you have. The categorization tells you what it does. The redundancy map tells you what overlaps. Only after you have all three can you make good decisions about consolidation. Teams that skip the audit and jump straight to consolidation end up removing components that were actually needed in edge cases, or merging components that have subtly different requirements.

Building the component inventory

The inventory is a complete list of every component in the codebase. Not the components you think exist. Not the components in the Figma file. The actual components that are currently rendered in production. This distinction matters because most teams discover that their mental model of the codebase diverges significantly from reality.

Start by searching the codebase for component definitions. In a React project, that means finding every file that exports a function or class component. In a Vue project, look for single-file components. In a server-rendered project with partials, search for template includes. Use your IDE or a simple grep to generate the initial list.
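Here is a minimal sketch of that initial search, written in Python as a throwaway script rather than a tool. It assumes a React and/or Vue codebase. Any exported PascalCase function, arrow-function constant, or class counts as a component, and any `.vue` file counts as a single-file component named after its file. The function name `find_components` is hypothetical, and the regular expression is a heuristic. It misses components exported through a separate `export { Card }` or `export default Card` statement, and it can pick up short all-caps constants, so review the output by hand.

```python
import re
from pathlib import Path

# Heuristic: exported PascalCase functions, arrow-function constants, or
# classes. Names with underscores (API_URL) are skipped, but short all-caps
# constants such as MAX can still slip through, so review the results.
REACT_EXPORT = re.compile(
    r"export\s+(?:default\s+)?"
    r"(?:function\s+([A-Z][A-Za-z0-9]*)"
    r"|const\s+([A-Z][A-Za-z0-9]*)\s*="
    r"|class\s+([A-Z][A-Za-z0-9]*))"
)
SCRIPT_SUFFIXES = {".js", ".jsx", ".ts", ".tsx"}


def find_components(root: str) -> list[tuple[str, str]]:
    """Return (file path, component name) pairs for files under root."""
    found = []
    for path in sorted(Path(root).rglob("*")):
        if not path.is_file() or "node_modules" in path.parts:
            continue
        if path.suffix == ".vue":
            # A Vue single-file component is conventionally named after its file.
            found.append((str(path), path.stem))
        elif path.suffix in SCRIPT_SUFFIXES:
            text = path.read_text(encoding="utf-8", errors="ignore")
            for match in REACT_EXPORT.finditer(text):
                # Exactly one of the three alternatives captured the name.
                name = next(group for group in match.groups() if group)
                found.append((str(path), name))
    return found
```

Calling `find_components("src")` returns pairs such as `("src/ui/Button.tsx", "Button")`, which can go straight into the inventory spreadsheet.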

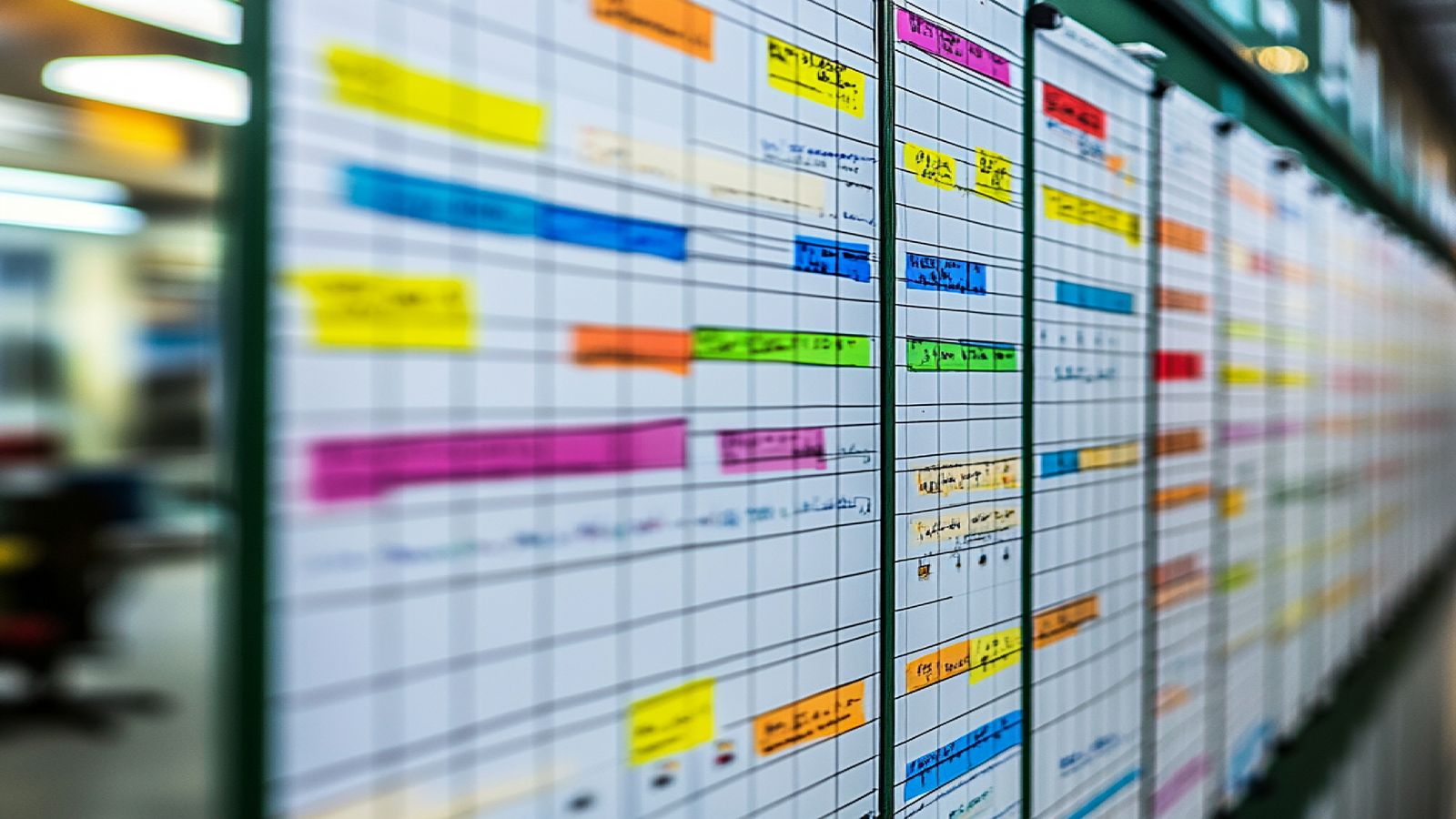

For each component, record the file path, the component name, the number of files that import it, and whether it accepts props or configuration options. A spreadsheet works fine for this. Do not build a custom tool. The goal is to finish the inventory in one or two days, not to engineer a perfect cataloging system.
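If you want the import counts without tallying them by hand, a short script can fill that column. This sketch assumes ES module `import ... from` statements and takes the (file path, component name) pairs from your initial search as input. The names `count_importers` and `write_inventory` are hypothetical, as are the CSV column names. The script leaves `accepts_props` blank for a person to fill in. It only writes a CSV for the spreadsheet, in keeping with the advice not to build a cataloging system.

```python
import csv
import re
from pathlib import Path

SOURCE_SUFFIXES = {".js", ".jsx", ".ts", ".tsx", ".vue"}


def count_importers(root: str, name: str) -> int:
    """Count source files with an ES `import ... from` statement naming `name`.

    Only identifiers, braces, commas, `*` and whitespace may appear between
    `import` and `from`, so a match stays within import-like text instead of
    running across string literals or ordinary code.
    """
    pattern = re.compile(
        rf"\bimport\s+[\w\s{{}},*]*?\b{re.escape(name)}\b[\w\s{{}},*]*?\bfrom\b"
    )
    count = 0
    for path in Path(root).rglob("*"):
        if (
            path.is_file()
            and path.suffix in SOURCE_SUFFIXES
            and "node_modules" not in path.parts
        ):
            text = path.read_text(encoding="utf-8", errors="ignore")
            if pattern.search(text):
                count += 1
    return count


def write_inventory(root: str, components: list[tuple[str, str]], out_path: str) -> None:
    """Write one CSV row per (file path, component name) pair."""
    with open(out_path, "w", newline="", encoding="utf-8") as f:
        writer = csv.writer(f)
        writer.writerow(["file", "component", "imported_by", "accepts_props"])
        for file, name in components:
            # accepts_props stays blank: fill it in while reviewing each file.
            writer.writerow([file, name, count_importers(root, name), ""])
```

The script rescans the whole tree for every component. That is slow, but acceptable for a one-off run over a few hundred components.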

Pay special attention to components that are defined inline rather than extracted into their own files. Many codebases contain buttons, badges, and small UI elements that are built inline inside larger page components. These inline components are easy to miss in an automated search, but they represent a significant portion of the duplication problem. A manual pass through the five or six most complex pages usually surfaces the inline components that automated search misses.
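Automated search can still support the manual pass by shortlisting candidates. The sketch below assumes JavaScript or TypeScript source. It flags PascalCase functions and arrow-function constants on lines that do not begin with `export`, including ones nested inside a page component. `find_inline_components` is a hypothetical name. The sketch also reports components declared first and exported later in the same file, so treat the result as a reading list for the manual pass, not a replacement for it.

```python
import re

# Heuristic: a PascalCase function, or a PascalCase constant assigned an arrow
# function, on a line that does not start with `export`. Leading whitespace is
# allowed so components nested inside a page component are found as well.
INLINE_DEF = re.compile(
    r"^[ \t]*(?!export\b)"
    r"(?:function\s+(?P<fn>[A-Z][A-Za-z0-9]*)\s*\("
    r"|const\s+(?P<const>[A-Z][A-Za-z0-9]*)\s*=\s*(?:\([^)]*\)|\w+)\s*=>)",
    re.MULTILINE,
)


def find_inline_components(source: str) -> list[str]:
    """Return names of unexported, component-like definitions in one file."""
    return [m.group("fn") or m.group("const") for m in INLINE_DEF.finditer(source)]
```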

When the inventory is complete, you will typically have somewhere between 50 and 300 components, depending on the size of the project. Do not be alarmed if the number is higher than expected. Most projects accumulate more components than anyone realizes, which is precisely why you are doing the audit.

Categorizing components by function

With the inventory complete, assign each component to a functional category. The categories should reflect what the component does, not where it lives in the file structure. Common categories include: navigation (headers, sidebars, breadcrumbs, tabs), data display (cards, tables, lists, badges, stats), data input (forms, text fields, selects, checkboxes, toggles), feedback (alerts, toasts, modals, progress indicators), layout (containers, grids, spacers, dividers), and media (images, videos, avatars, icons).

Some components will not fit neatly into a single category. A card component with an embedded form handles both data display and data input. Assign it to the category that best describes its primary purpose and add a note about the secondary function. Do not create overly specific categories to accommodate edge cases. Six to ten categories are enough for most projects.
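One way to record these decisions is a fixed set of categories plus an optional secondary one. The sketch uses the six example categories above. The keyword lists are assumptions taken from the examples in the text, and they only produce a first-pass suggestion that a person confirms. `Category`, `suggest_category`, and `CategorizedComponent` are hypothetical names.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Category(Enum):
    NAVIGATION = "navigation"
    DATA_DISPLAY = "data display"
    DATA_INPUT = "data input"
    FEEDBACK = "feedback"
    LAYOUT = "layout"
    MEDIA = "media"


# Name fragments used only for a first-pass suggestion. "tabs" rather than
# "tab" keeps names like DataTable from being read as navigation.
KEYWORDS = {
    Category.NAVIGATION: ("header", "sidebar", "breadcrumb", "tabs", "nav"),
    Category.DATA_DISPLAY: ("card", "table", "list", "badge", "stat"),
    Category.DATA_INPUT: ("form", "field", "input", "select", "checkbox", "toggle"),
    Category.FEEDBACK: ("alert", "toast", "modal", "dialog", "progress"),
    Category.LAYOUT: ("container", "grid", "spacer", "divider"),
    Category.MEDIA: ("image", "video", "avatar", "icon"),
}


def suggest_category(name: str) -> Optional[Category]:
    """Return the first category whose keyword appears in the name, if any."""
    lowered = name.lower()
    for category, words in KEYWORDS.items():
        if any(word in lowered for word in words):
            return category
    return None


@dataclass
class CategorizedComponent:
    name: str
    primary: Category
    secondary: Optional[Category] = None  # e.g. a card with an embedded form
    note: str = ""
```

Substring matching is crude: a name like `Platform` would match "form". A `None` result simply means the component needs a manual decision.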

Categorization reveals structural patterns that the raw inventory hides. You might discover that your project has 14 components in the "data display" category but only 2 in the "feedback" category. That imbalance tells you something about how the product was built: the team invested in content presentation but handled user feedback inconsistently, probably with ad hoc alert messages, or with errors that were only logged to the console, rather than a unified notification pattern.
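Once each component has a primary category, spotting this kind of imbalance takes a simple tally. In this sketch the threshold of three components is an arbitrary assumption, and `category_counts` and `thin_categories` are hypothetical helpers. A thin category is a prompt to look for improvised solutions, not proof that they exist.

```python
from collections import Counter


def category_counts(assignments: dict[str, str]) -> Counter:
    """Tally components per primary category (component name -> category)."""
    return Counter(assignments.values())


def thin_categories(counts: Counter, categories: list[str], minimum: int = 3) -> list[str]:
    """Categories with fewer than `minimum` components, including empty ones."""
    # Counter returns 0 for categories that never appear.
    return [category for category in categories if counts[category] < minimum]
```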

This kind of structural insight is one of the most valuable outputs of an audit. It tells you not just what exists but what is missing. Gaps in component coverage are often the root cause of inconsistency: developers fill gaps by improvising, and improvised solutions tend to diverge. Ongoing governance practices, such as a shared component catalog and a review step for new components, can keep these gaps from forming in future development cycles.

Mapping redundancy across components

Redundancy mapping is where the audit delivers its most actionable findings. Within each category, compare components side by side and identify groups that serve the same purpose. Two button components with different APIs but identical visual output are redundant. Three card layouts that differ only in padding values are redundant. A modal component and a dialog component that both render centered overlay panels are redundant.
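Comparing every pair by hand gets slow in large categories, so a cheap signal can decide which pairs to look at first. This sketch scores same-category pairs by the overlap of their prop names, using Jaccard similarity (shared props divided by all props). The 0.6 threshold and the `redundancy_candidates` name are assumptions. Redundant components can have different APIs, as with the two button components above, so a low score does not rule out redundancy. Use the list to order the side-by-side review, not to replace it.

```python
from itertools import combinations


def jaccard(a: set[str], b: set[str]) -> float:
    """Shared items divided by all items; two empty sets count as identical."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def redundancy_candidates(
    components: list[tuple[str, str, set[str]]], threshold: float = 0.6
) -> list[tuple[str, str, float]]:
    """components: (name, category, prop names). Only same-category pairs are compared."""
    pairs = []
    for (name_a, cat_a, props_a), (name_b, cat_b, props_b) in combinations(components, 2):
        if cat_a != cat_b:
            continue
        score = jaccard(props_a, props_b)
        if score >= threshold:
            pairs.append((name_a, name_b, round(score, 2)))
    return pairs
```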

Not all redundancy is bad. Sometimes two components look similar but serve genuinely different use cases. A compact data table for dashboards and a detailed data table for admin panels might share visual similarities but have different performance characteristics, different column behaviors, and different interaction patterns. Merging them would create a single component so loaded with conditional logic that it becomes unmaintainable. Mark these as "intentional variants" and move on.

The redundancies you want to target are the ones caused by unawareness. Developer A did not know Developer B had already built a tooltip component, so Developer A built another one. Neither developer was wrong. The process was wrong. The absence of a shared component catalog meant duplication was inevitable.

For each redundancy group, document which components are involved, how many places each is used, and how they differ. The differences matter because they will determine your consolidation strategy. Two button components that differ only in class names are easy to merge. Two button components that differ in event handling, accessibility attributes, and animation behavior require more careful reconciliation.
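A small record per group keeps that documentation consistent. This sketch uses hypothetical `Member` and `RedundancyGroup` types. Each member stores its usage count and prop names, the group computes which props differ, and an `intentional_variant` flag marks groups you decided to keep. Prop differences only describe the API surface. Differences in event handling, accessibility attributes, or animation still need to be written into `notes` by hand.

```python
from dataclasses import dataclass, field


@dataclass
class Member:
    name: str
    usage_count: int  # number of importing files
    props: set[str]


@dataclass
class RedundancyGroup:
    purpose: str
    members: list[Member]
    intentional_variant: bool = False
    notes: list[str] = field(default_factory=list)

    def shared_props(self) -> set[str]:
        """Props that every member accepts."""
        return set.intersection(*(m.props for m in self.members))

    def differences(self) -> dict[str, list[str]]:
        """For each member, the props it has that are not shared by all members."""
        shared = self.shared_props()
        return {m.name: sorted(m.props - shared) for m in self.members}
```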

Consolidation without breaking production

Consolidation is the step where audits most commonly derail. The team has a clear redundancy map, everyone agrees that three modal components should become one, and then the work stalls because nobody can justify spending two sprints on refactoring when there are features to ship.

The solution is incremental consolidation tied to existing work. When a developer touches a file that imports a redundant component, they migrate that file to the canonical version as part of their change. No dedicated refactoring sprint. No separate ticket backlog. The migration happens gradually, attached to work the team was already doing.
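A non-blocking check can make the migration hard to forget without making it mandatory. It reminds the author when a changed file still imports a deprecated component. This sketch assumes you pass in the changed paths (for example, from `git diff --name-only`) and a mapping from deprecated to canonical names. `migration_reminders` is hypothetical, and its regular expression only recognizes single-line `import` statements. It returns messages rather than failing, so it never blocks a merge.

```python
import re
from pathlib import Path


def migration_reminders(changed_files: list[str], deprecated: dict[str, str]) -> list[str]:
    """Return one reminder per (changed file, deprecated import) pair."""
    reminders = []
    for file in changed_files:
        path = Path(file)
        if not path.is_file():
            continue  # deleted or renamed away in this change
        text = path.read_text(encoding="utf-8", errors="ignore")
        for old, new in deprecated.items():
            # Single-line imports only; multi-line import blocks are not matched.
            if re.search(rf"\bimport\b[^\n]*\b{re.escape(old)}\b", text):
                reminders.append(f"{file}: imports {old}; migrate to {new}")
    return reminders
```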

To make this work, you need a clear "winner" for each redundancy group. Decide which component becomes canonical and document that decision. The canonical component should be the one with the most complete API, the best accessibility support, and the widest current usage. If none of the existing components is clearly superior, create a new canonical component that combines the best aspects of the redundant ones, and then migrate away from all of them.
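The "clearly superior" test can be made explicit: a candidate wins only if it is at least as good as every other candidate on all three criteria. In this sketch the numeric scores are assumptions about how a team might rate candidates, for example use cases covered by the API, accessibility checklist items passed, and number of importing files. `Candidate` and `pick_canonical` are hypothetical names. A `None` result is the signal to design a new canonical component.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Candidate:
    name: str
    api_completeness: int  # e.g. use cases the API covers
    accessibility: int     # e.g. checklist items passed
    usage_count: int       # e.g. importing files


def pick_canonical(candidates: list[Candidate]) -> Optional[Candidate]:
    """Return a candidate that is at least as good as every other on all three
    criteria, or None when no single candidate is clearly superior.
    If several tie on every criterion, the first one in the list is returned."""
    def scores(c: Candidate) -> tuple[int, int, int]:
        return (c.api_completeness, c.accessibility, c.usage_count)

    for candidate in candidates:
        if all(
            all(mine >= theirs for mine, theirs in zip(scores(candidate), scores(other)))
            for other in candidates
        ):
            return candidate
    return None
```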

Deprecation notices accelerate the process. Add a console warning to each redundant component that says something like "This component is deprecated. Use CanonicalButton instead." Emit the warning only in development builds. That way it is visible to developers, never reaches production users, and creates gentle pressure to migrate without blocking any work in progress.

A well-structured design system such as Solid can make this consolidation step smoother, because the canonical component definitions and their API surfaces are already documented and tested.

Common mistakes in component audit processes

The most damaging mistake is treating the audit as a one-time event. An audit that produces a spreadsheet, triggers a few weeks of consolidation work, and then gets forgotten will need to be repeated a year later when the same duplication problems resurface. The audit should produce not just findings but ongoing practices: a shared component catalog, a review checklist for new components, and a team agreement on how to handle situations where an existing component almost, but not quite, meets a new requirement.

Another common mistake is auditing visual appearance instead of component function. Two components can look identical and still be meaningfully different. A primary button and a call-to-action link might render the same blue rectangle, but one is a button element and the other is an anchor element. They have different semantic roles, different keyboard behaviors, and different accessibility expectations. Merging them based on appearance would create an element that is sometimes a button and sometimes a link, which is a well-known accessibility antipattern.
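A guard like the following can be applied to any pair flagged as visually redundant before it is merged. It is a deliberately narrow sketch. `semantic_conflict` and the set of interactive root elements are assumptions. It only catches differences in the rendered root element, not other semantic differences such as ARIA roles.

```python
# Interactive elements whose semantics (role, keyboard behavior) differ even
# when they are styled identically. This set is illustrative, not exhaustive.
INTERACTIVE_ROOTS = {"button", "a", "input", "select", "textarea"}


def semantic_conflict(root_a: str, root_b: str) -> bool:
    """True when two look-alike components render different root elements and
    at least one of them is interactive, e.g. <button> versus <a>."""
    return root_a != root_b and (root_a in INTERACTIVE_ROOTS or root_b in INTERACTIVE_ROOTS)
```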

Scope creep is the third pitfall. An audit that starts as a component inventory and expands to include CSS methodology review, utility class rationalization, icon library consolidation, and design tool migration will never finish. Keep the scope tight: inventory the components, categorize them, map the redundancy, plan the consolidation. Everything else is a separate project.

Finally, do not underestimate the political dimension. Consolidation means telling some developers that the component they built will be replaced by someone else's version. Frame the conversation around the team's output, not individual contributions. The goal is a codebase that is easier for everyone to work in, not a judgment about whose button component was better.

Making audit results stick

The lasting value of a component audit is not the spreadsheet. It is the shared awareness of what the codebase contains and the team practices that prevent duplication from accumulating again. Three specific practices make audit results durable.

First, maintain a living component catalog. This does not need to be a custom-built tool. A markdown file in the repository that lists every canonical component with a one-line description and a file path is enough. Update it when components are added or removed. New developers read it during onboarding. Existing developers reference it before building something new.

Second, add a component check to your pull request template. A simple question: "Does this PR introduce a new UI component? If yes, is there an existing component that serves the same purpose?" This creates a moment of reflection before new components enter the codebase. It does not block anyone. It just makes the decision explicit.
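If you also want the question to point at specific files, a small helper can list the component-like files a branch adds. This sketch parses the tab-separated output of `git diff --name-status` and returns the checklist question as text. It treats added `.jsx`, `.tsx`, and `.vue` files as possible components. That is an assumption, because plain `.ts` or `.js` files can define components too. The function names are hypothetical. The helper never fails, so it stays a moment of reflection rather than a gate.

```python
COMPONENT_SUFFIXES = (".jsx", ".tsx", ".vue")


def new_component_files(name_status: str) -> list[str]:
    """Return files with status A (added) whose extension suggests a component."""
    added = []
    for line in name_status.splitlines():
        parts = line.split("\t")
        # Added files appear as "A<TAB>path"; renames and copies have three fields.
        if len(parts) == 2 and parts[0] == "A" and parts[1].endswith(COMPONENT_SUFFIXES):
            added.append(parts[1])
    return added


def review_prompt(name_status: str) -> str:
    """Checklist text for the PR description, or "" when nothing new was added."""
    files = new_component_files(name_status)
    if not files:
        return ""
    listing = "\n".join(f"- {path}" for path in files)
    return (
        "This PR adds files that may define new UI components:\n"
        f"{listing}\n"
        "Is there an existing component that serves the same purpose?"
    )
```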

Third, schedule a lightweight re-audit every six months. Not a full inventory. A quick review of the component catalog against the current codebase to catch any drift. Two hours of focused review every six months is dramatically cheaper than a full audit every two years.
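Part of that review can be mechanical if the catalog uses a consistent line format. This sketch assumes each catalog entry is a markdown list item that starts with the bolded component name followed by a colon, such as `- **Button**: ...`. It also assumes you supply the set of component names found in the codebase, for example from the same search used for the inventory. `catalog_names` and `drift` are hypothetical. Deprecated components still awaiting migration will show up as undocumented until they are removed, which is expected.

```python
import re

# Assumed catalog line format: "- **Name**: description".
ENTRY = re.compile(r"^- \*\*(?P<name>[^*]+)\*\*:")


def catalog_names(markdown: str) -> set[str]:
    """Component names listed in the catalog file."""
    names = set()
    for line in markdown.splitlines():
        match = ENTRY.match(line)
        if match:
            names.add(match.group("name"))
    return names


def drift(markdown: str, found_in_code: set[str]) -> dict[str, list[str]]:
    """Compare catalog entries with component names found in the codebase."""
    listed = catalog_names(markdown)
    return {
        "undocumented": sorted(found_in_code - listed),  # in code, missing from catalog
        "stale": sorted(listed - found_in_code),  # in catalog, gone from code
    }
```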